An interesting article called The sentiment at Legalweek 2017 by Angela Bunting, Director of eDiscovery at Nuix, considers the value of sentiment analysis tools.

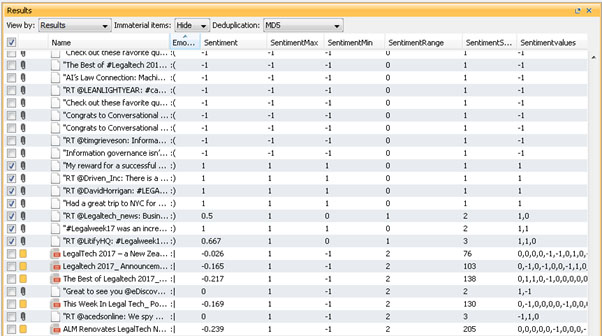

Angela Bunting took data relating to Legaltech – tweets with relevant hashtags and blog posts – and put it into software which purports to analyse sentiment. The software’s conclusion was that the material was overwhelmingly negative, which does not accord with her own sense of what was said about the show.

As Angela says, cultural variations, slang, irony and sarcasm all raise difficulties when subjected to computer analysis. Looking at my own article about Legaltech, which was largely enthusiastic about it, I can see that some of the content and phrasing, not least the passages in which I write about the reported reactions of others, might lead sentiment analysis software to the overriding conclusion that I was unimpressed. A human would (I hope) understand that I set out the objections in order to knock them down. Legaltech is a tall poppy and, as I observed in the article, some people do like to moan about it; that instinct, when added to more justified criticism, might well give sentiment analysis tools the “wrong” idea because they lack the ability to overlay their conclusion with understanding of the human motive behind some of the inputs.
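To make the point concrete, here is a deliberately crude sketch of a word-counting sentiment scorer. It is not how Nuix or any other product works; the word lists, the function name `naive_sentiment` and the sample text are all invented for illustration. It simply shows how a passage which reports other people's objections in order to reject them can still come out as negative.

```python
import re

# Hypothetical mini-lexicons, made up for this example only.
# Real tools use far larger word lists or trained models.
POSITIVE = {"useful", "enthusiastic", "valuable", "good", "excellent"}
NEGATIVE = {"crowded", "expensive", "pointless", "moan", "bad"}


def naive_sentiment(text: str) -> int:
    """Count positive words minus negative words; below zero reads as 'negative'."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(w in POSITIVE for w in words)
    negatives = sum(w in NEGATIVE for w in words)
    return positives - negatives


# The writer quotes the complaints of others, then disagrees with them.
review = (
    "Some people moan that the show is crowded, expensive and pointless. "
    "I disagree: it was useful."
)

score = naive_sentiment(review)
print(score)  # negative, although the writer's own view is favourable
```

The scorer has no notion of who holds each opinion, so the quoted complaints outweigh the single word of approval. More sophisticated tools do better than this, but the underlying difficulty is the same one described above.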

None of this is to deride the importance, or potential importance, of sentiment analysis. Provided that it is coupled with a human overview, it offers the potential for useful interim conclusions such as whether a company’s employees or customers are satisfied or not. Human common sense is required – analysis of the contents of a complaints hotline will almost certainly produce an overwhelmingly negative result because of the nature of the source.

We already have analytical tools designed to show up things which do not necessarily appear from the bare words used by the participants. At a relatively simple level, for example, the words “Let’s continue this off-line” are generally taken to be an indicator that there is another, and potentially more interesting, strand of discussion going on elsewhere and off the record.

Insurers are said to use something equivalent to sentiment analysis to decide, or at least to suggest, that someone reporting an incident is lying.

We still need humans to check, override or draw conclusions from data. One of my children’s maths teachers once said that the ability to do sums in one’s head was less important than the ability to sense whether the answer is broadly what you would expect. By all means use a calculator, he said, but have the wit to notice if the decimal point has been put in the wrong place. Much the same applies to data subjected to predictive analytics or sentiment analysis: a result which confounds your expectations may be an evidential breakthrough; it may equally be the result of a mistake.

As with so many things, we must keep alert for developments in areas like this, know what they cost, what they do and don’t do, and what they might achieve, so that when a relevant case turns up, we don’t overlook them as a source of evidence.