Dr David Grossman is Associate Director of the Georgetown Information Retrieval Laboratory and an Adjunct Professor of Computer Science at the Illinois Institute of Technology. He has recently written a paper called *Measuring and Validating the Effectiveness of Relativity Assisted Review* which, in four easily read pages, discusses the accuracy of the Relativity Assisted Review technology and workflow. Although, as the paper’s name implies, a specific proprietary tool was used for the validation exercises, the principles set out in it are useful for anyone contemplating the use of technology assisted review for eDiscovery / eDisclosure.

Before looking at the paper itself, it is worth considering what objective a lawyer has in mind when using any technology to conduct any discovery exercise. In essentials, it is an information retrieval exercise similar to many others – the stakes are high, but no higher than those which apply to, for example, the analysis of medical data before the launch of a new treatment or medicine, or of stress data in the design of a new aeroplane. Life or death turns on the outcome of such exercises, which depend on collecting data, sampling and analysing it, and testing the results within margins of error which vary with the purpose.

Even the most conscientious lawyer would not claim that the identification of relevant documents for eDiscovery is more important than the testing of medicines or aeroplanes, yet lawyers seem noticeably reluctant to put their faith in the statistics which underlie such things. What is so special about eDiscovery?

Two other factors need to be considered in looking at the accuracy of information retrieval systems used for discovery. One is the question whether there are better ways of doing the job – do you get a better (that is, more accurate) result by any other method? The other is the standard to which you aspire – nothing in the rules of the US or UK civil jurisdictions requires perfection (indeed, any such requirement has been expressly rejected in case law in both jurisdictions) and we certainly do not expect a standard as high as is required in the evaluation of medical treatments or heavier-than-air flying machines destined to carry hundreds of people.

This introduction does not imply that a low standard is acceptable in eDiscovery / eDisclosure. Professional duty, the fear of court punishment and the hope of finding the killer document all emphasise the need for lawyers to be satisfied that they have done a proper job. It is true to say, however, that electronic discovery is not a special case requiring some exceptional level of accuracy. The tools and methodologies used to draw conclusions from data are well-established across a wide range of commercial, industrial and scientific disciplines.

Since Dr Grossman’s paper uses Relativity Assisted Review as its test-bed, what does kCura say about its tool? The web site description is this:

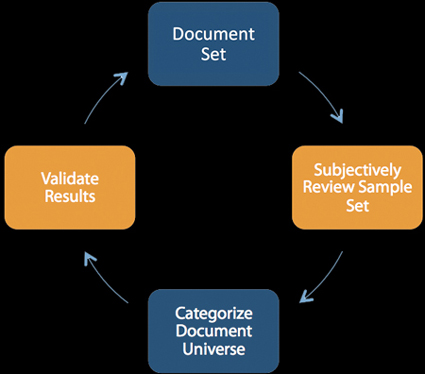

Assisted Review combines technology and process, allowing you to train Relativity on key issues by coding subsets of documents. It requires three main components—domain expertise, an analytics engine, and a method for validation.

Review teams begin by subjectively coding a sample set of documents. Based on those expert decisions, Relativity determines how the rest of the documents in a case should be marked. You then validate Relativity’s work by manually reviewing statistically relevant subsets of documents to ensure coding accuracy.

Because batches of documents categorized by Relativity are validated using statistics, you can be confident in your results.

It is that closing assertion which is the subject of Dr Grossman’s paper; that is, he addresses the question “how can you be confident in your results?” A four-page, lucidly written paper needs no summary from me, but briefly the goals were to get answers to three questions:

- Q1: Does sampling work to estimate the total responsive and non-responsive documents in a collection?

- Q2: Does Assisted Review’s reporting functionality accurately reflect the true number of defects in the document universe? In other words, once categorization is completed, how well does a sample reflect the accuracy of the categorization that was just completed?

- Q3: Does the Assisted Review process improve effectiveness?
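The idea behind Q1 can be illustrated with a short Python sketch: draw a random sample, count the responsive documents in it, and scale the proportion up to the whole collection with a margin of error. This is not the method used in the paper or inside Relativity; the collection, the 15% responsive rate, the sample size of 1,500 and the function name `estimate_responsive` are all assumptions made up for illustration, and the margin uses the simple normal approximation at roughly 95% confidence.

```python
import math
import random


def estimate_responsive(population, sample_size, z=1.96, seed=0):
    """Estimate the responsive fraction of a collection from a random sample.

    `population` is a list of booleans (True = responsive). In a real review
    these labels would be unknown until a reviewer codes the sampled documents.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    sample = rng.sample(population, sample_size)  # sampling without replacement
    p_hat = sum(sample) / sample_size  # proportion responsive in the sample
    # Normal-approximation margin of error (no finite-population correction).
    margin = z * math.sqrt(p_hat * (1 - p_hat) / sample_size)
    return p_hat, margin


# Hypothetical collection: 20,000 documents, of which 3,000 are responsive.
population = [True] * 3000 + [False] * 17000

p_hat, margin = estimate_responsive(population, sample_size=1500)
estimated_total = round(p_hat * len(population))

print(f"Sample proportion responsive: {p_hat:.3f} +/- {margin:.3f}")
print(f"Estimated responsive documents: about {estimated_total}")
```

The point of the exercise is that the reviewer looks at 1,500 documents, not 20,000, and still gets an estimate of the total with a stated margin of error.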

The methodology involved taking five topics, a total of 20,000 documents, from the TREC Legal Track which had already been manually assessed. The Relativity Assisted Review workflow was run five times, each time with two training rounds. Samples were taken of both responsive and non-responsive documents – note that, for lawyer comfort purposes, it is important to check both – and the results were compared with a control set of categorised documents.
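Comparing machine categorisation with a manually assessed control set usually comes down to counting agreements and disagreements. The Python sketch below uses precision and recall, which are standard information retrieval measures; this post does not say which measures the paper reports, so treat the choice as an assumption. The function name `precision_recall` and the eight-document example are hypothetical.

```python
def precision_recall(predicted, truth):
    """Compare predicted labels with control-set labels (True = responsive)."""
    pairs = list(zip(predicted, truth))
    true_pos = sum(1 for p, t in pairs if p and t)       # correctly marked responsive
    false_pos = sum(1 for p, t in pairs if p and not t)  # wrongly marked responsive
    false_neg = sum(1 for p, t in pairs if not p and t)  # responsive but missed
    # Precision: of the documents marked responsive, how many really are.
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    # Recall: of the truly responsive documents, how many were found.
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall


# Hypothetical control set of eight documents and the tool's categorisation.
truth = [True, True, True, False, False, False, False, True]
predicted = [True, True, False, True, False, False, False, True]

precision, recall = precision_recall(predicted, truth)
print(f"Precision: {precision:.2f}, Recall: {recall:.2f}")
```

Checking the documents marked non-responsive matters as much as checking those marked responsive, because the missed documents (the false negatives) only show up when the non-responsive pile is sampled.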

The conclusions were positive – Assisted Review reporting using sampling accurately reflected the full population, and document categorisation became more effective with each training round.

The positive results are important. Lawyers think of technology-assisted review as risky when compared with their traditional ways of searching. The truth is that they have no verifiable way of measuring the accuracy of traditional searches unless they can conduct tests of the kind described in Dr Grossman’s paper and, crucially, can do so in a reasonable time and in a manner which is capable of demonstration to others. If technology solutions like Relativity Assisted Review are offering validation routines of the kind covered in Dr Grossman’s paper, then lawyers should surely be considering the use of tools like this.